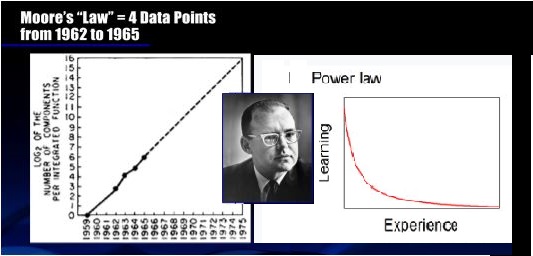

Previously, we talked about the Tic-Toc of computing at Intel, and how Gordon Moore’s law of computing (roughly 18 months to double speed and halve price) is starting to hit a brick wall (Outa Time, the tic-toc of Intel and modern computing). Shrinking silicon chips below about 14 nanometers runs into physical limits inherent in the material, and those limits will be hard to surpass. Ed Jordan’s dissertation addressed this limit, and his Delphi study showed what the next technology might be and how soon it might be viable. His study found that several technologies were looming on the horizon (likely less than 50 years away), and that organic computing (i.e., protein-based) was the most promising and should certainly happen sometime in the next 30 years.
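To put rough numbers on that doubling rule, here is a minimal Python sketch. The function name `growth_factor` and the fixed 18-month period are illustrative assumptions taken from the popular version of Moore's law quoted above, not figures from Jordan's dissertation.

```python
def growth_factor(years, doubling_period_months=18):
    """Return how many times performance multiplies after `years`,
    assuming (hypothetically) one clean doubling every 18 months."""
    doublings = (years * 12) / doubling_period_months
    return 2 ** doublings


if __name__ == "__main__":
    for years in (3, 15, 30):
        print(years, "years ->", growth_factor(years), "x")
```

Under this assumption, 15 years is 10 doublings, or roughly a thousandfold gain, which is why even a short stall in the doubling cycle matters so much.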

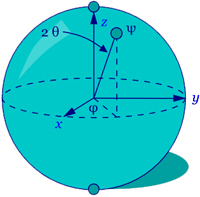

Apparently quantum computing technology is here and now, kinda, especially at Google. See the Nicas (2017) WSJ article about quantum computing in The Future of Computing. As the article says of the expert Neven, he’s pretty certain that no one understands quantum physics. At the atomic level, a qubit can be both on and off at the same time. The conversation goes into parallel universes and such… Both here and there, simultaneously. The quantum computer is run at close to absolute zero temperature (give or take a fraction of a degree). Storage density using qubits is unimaginable. The computer works completely differently, however: it eliminates the non-feasible to arrive at good answers, but not necessarily the best answer. Heuristics, kinda. The error rate is humongous, apparently, requiring maybe 100 error-correction qubits for every single working qubit.
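For a feel of what "both on and off" and the error-correction overhead mean in numbers, here is a toy Python sketch. It is plain arithmetic on an ordinary computer, not a real quantum simulation, and the function names and the 100-to-1 ratio (taken from the rough figure above) are assumptions for illustration only.

```python
import math
import random


def equal_superposition():
    """Amplitudes of one qubit weighted equally between 0 (off) and 1 (on)."""
    a = 1 / math.sqrt(2)
    return (a, a)


def measurement_probabilities(state):
    """Chance of reading 0 or 1: the squared magnitude of each amplitude."""
    a0, a1 = state
    return (abs(a0) ** 2, abs(a1) ** 2)


def measure(state, rng=random):
    """Reading the qubit forces a single answer, 0 or 1."""
    p0, _ = measurement_probabilities(state)
    return 0 if rng.random() < p0 else 1


def physical_qubits_needed(working_qubits, correction_per_working=100):
    """Total qubits if each working qubit needs ~100 helpers (assumed ratio)."""
    return working_qubits * (1 + correction_per_working)


if __name__ == "__main__":
    qubit = equal_superposition()
    print("probabilities:", measurement_probabilities(qubit))
    print("ten readings:", [measure(qubit) for _ in range(10)])
    print("qubits for 50 working qubits:", physical_qubits_needed(50))
```

Each reading comes out as a plain 0 or 1, about half the time each, and at the assumed ratio a machine with 50 working qubits would need 5,050 qubits in total.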

Ed Jordan was reminiscing about quantum computing yesterday… “Basically, all computing in all its permutations needs to be rethunk. Quantum computing is sort of the Holy Grail. One could argue it is sort of like controlled fusion: always just 10 years away. Ten years ago, it was 10 years away. Ten years from now it may still be 10 years away. There is a truckload of money being thrown at it. But there isn’t anything mature enough yet to do anything that looks like real computing. The problem is how do you read out the results? Like Schrödinger’s cat, that qubit could be alive or dead, and by looking at it you cause different results to happen – as opposed to something that exists independent of your observation.”

Quantum computing is now moving past the technically impossible into the proven and functional, and may soon be viable. The players in this market are Google (Alphabet), IBM and apparently the NSA (if whistleblower Snowden is to be believed).

Intel may not be able to capitalize on the next generation of computing. Some computations, such as breaking encryption, can probably be done in a couple of seconds on a quantum computer, even though they might take multiple current silicon computers a lifetime. There are several potential uses of the quantum computer that make businesses and security targets very nervous.

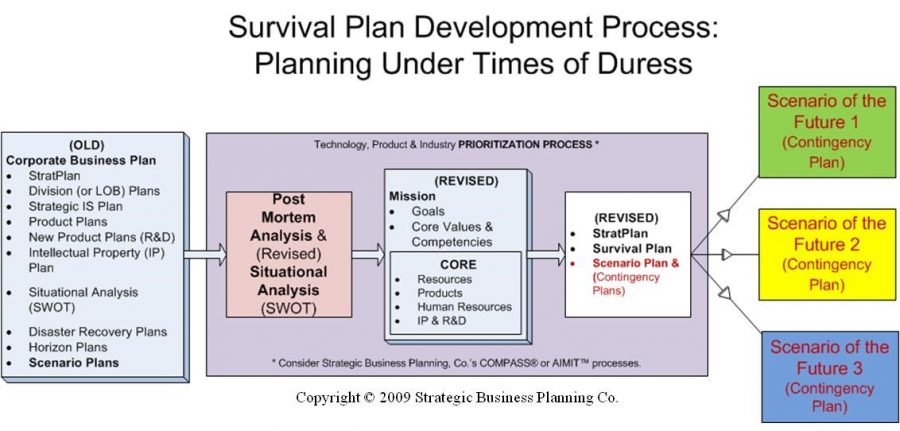

Jordan and Hall (2016) talk about using Delphi to anticipate deflection points that are possible on the horizon, including those scenarios that would be possible via quantum computing, or bio-computing for that matter. Drawing on experts or informed people could make such deflection points easier to spot, and make contingency planning more effective.

One of the most interesting things about the Nicas article is its look at the breakthroughs in computing technology, and how they compare with Jordan’s 2010 dissertation. Jordan found that two or three types of technology should likely be feasible within 25 to 40 years and viable in application within about 30 to 50 years. In his case that would be as early as about 2040. Note that the experts discussed by Nicas expected full application of a quantum computer by about 2026; that is when digital security will take on a whole new level of risk. It also makes you wonder how blockchain (bitcoin) will fare in the new age of supersonic computing.

This seems like a great time to start working on security safeguards that are nothing like the current technology. Can you imagine the return of no-tech or lo-tech? Kinda reminds you of the revival of the old “brick” phones for analog service (in the middle of the Everglades).

References

Debnath, S., Linke, N. M., Figgatt, C., Landsman, K. A., Wright, K., & Monroe, C. (2016). Demonstration of a small programmable quantum computer with atomic qubits. Nature, 536(7614), 63–66. doi:10.1038/nature18648

Jordan, E. A. (2010). The semiconductor industry and emerging technologies: A study using a modified Delphi Method. (Doctoral dissertation). Available from ProQuest Dissertations and Theses database. (UMI No. 3442759)

Jordan, E. A., & Hall, E. B. (2016). Group decision making and Integrated Product Teams: An alternative approach using Delphi. In C. A. Lentz (Ed.), The refractive thinker: Vol. 10. Effective business strategies for the defense sector. (pp. 1-20) Las Vegas, NV: The Refractive Thinker® Press. ISBN #: 978-0-9840054-5-1. Retrieved from: http://refractivethinker.com/chapters/rt-vol-x-ch-1-defense-sector-procurement-planning-a-delphi-augmented-approach-to-group-decision-making/

Nicas, J. (2017, November/December). Welcome to the quantum age. The Future of Computing in Wall Street Journal. Retrieved from: https://www.wsj.com/articles/how-googles-quantum-computer-could-change-the-world-1508158847