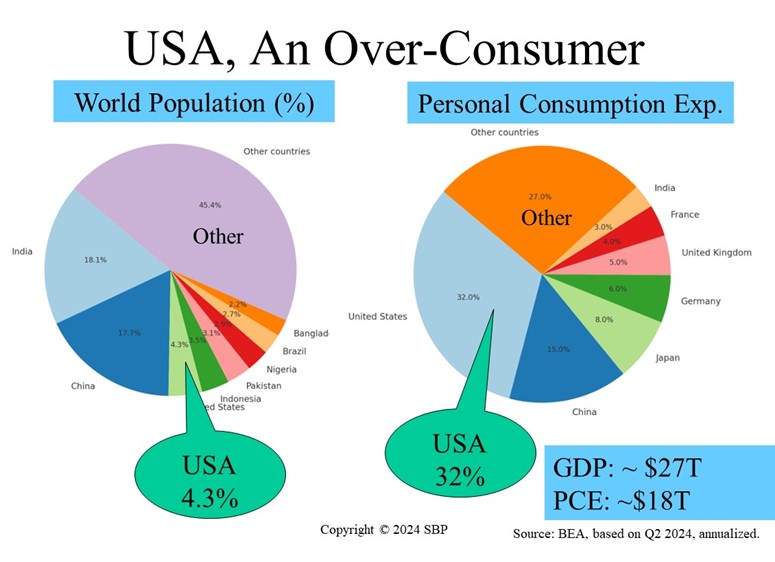

Despite making up just 4.3% of the global population, the U.S. accounts for a staggering 32% of worldwide personal consumption. This statistic highlights the economic weight of American consumers, who drive demand both at home and abroad. It also underscores a profound social responsibility: as a dominant force in global consumption, U.S. consumers have the power to influence market trends, sustainability practices, and social equity. The choices American shoppers make not only shape the economy but also have far-reaching implications for the planet and future generations.

Source: International Monetary Fund; United Nations, as of December 2023.

Written with the assistance of ChatGPT and Gemini (August 2024), with prompts by E. Hall.